My research interest includes technologies and industry-oriented solutions to speech processing: recognition, diarization, emotion recognition, spoof detection; speaker processing: recognition, verification, representation. I also have some experience in working with and delivering Large Language Models on the application level. I have had a few publications at the top-ranked conferences in speech and signal processing and technologies (ICASSP, Interspeech).

🎉 News in 2025

- (10th June) I got accepted to a funded PhD position at Trinity College Dublin, Ireland! I will be supversied by Prof. Naomi Harte.

- (19th May) Two first-authored papers are accepted at Interspeech 2025!

- (25th Jan) I started doing my internship at I2R, A*STAR Singapore under a project scholarship. I will be supervised by Dr. Tran Huy Dat.

📖 Educations

- 2025 - 2029 (Incoming), Doctor of Philosophy, School of Engineering, Trinity College Dubilin.

Topic: Multimodal speech recognition and understanding. - 2020 - 2025 (Now), Bachelor in Data Science & AI, Hanoi University of Science and Technology.

📝 Publications

* denotes equal contribution.

Count=6

2025

Pushing the Performance of Synthetic Speech Detection with Kolmogorov-Arnold Networks and Self-Supervised Learning Models

Long-Vu Hoang*, Phuong Tuan Dat*, Tran Huy Dat. Interspeech 2025.

TL;DR

These findings suggest that incorporating KAN into SSL-based models is a promising direction for advances in synthetic speech detection.

Acoustic scattering AI for non-invasive object classifications: A case study on hair assessment

Long-Vu Hoang*, Tuan Nguyen*, Tran Huy Dat. Interspeech 2025.

TL;DR

When an incident wave interacts with an object, it generates a scattered acoustic field encoding structural and material properties. By emitting acoustic stimuli and capturing the scattered signals from head-with-hair-sample objects, we classify hair type and moisture using AI-driven, deep-learning-based sound classification. The experimental results highlight acoustic scattering as a privacy-preserving, non-contact alternative to visual classification, opening huge potential for applications in various industries.

VoxVietnam: a Large-Scale Multi-Genre Dataset for Vietnamese Speaker Recognition  HuggingFace

HuggingFace

Hoang Long Vu, Phuong Tuan Dat, Pham Thao Nhi, Nguyen Song Hao, Nguyen Thi Thu Trang. IEEE ICASSP 2025.

TL;DR

This paper introduces VoxVietnam, the first multi-genre dataset for Vietnamese speaker recognition with over 187,000 utterances from 1,406 speakers and an automated pipeline to construct a dataset on a large scale from public sources.

2024

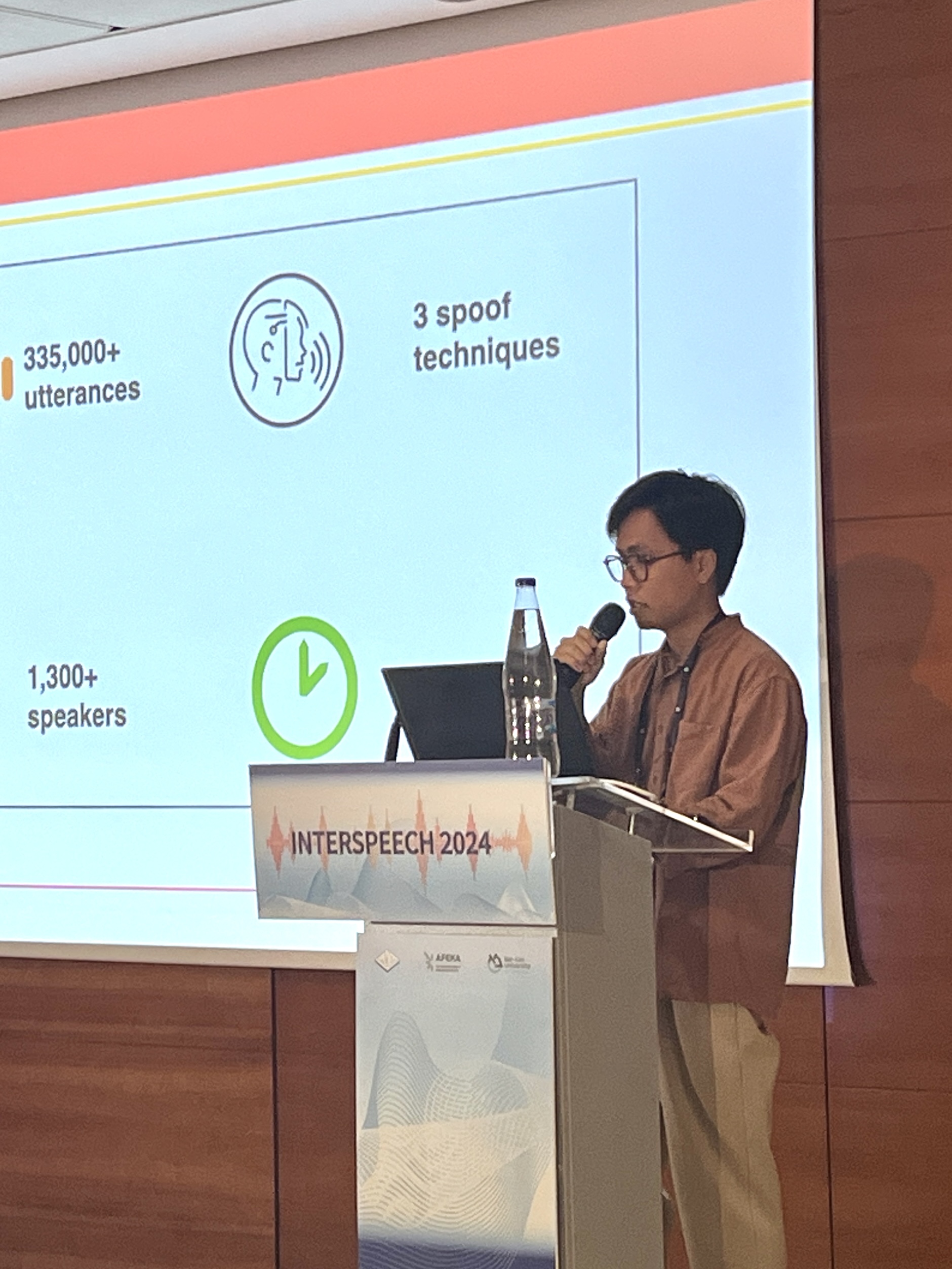

VSASV: A Vietnamese dataset for spoofing-aware speaker verification  HuggingFace

HuggingFace

Vu Hoang*, Viet Thanh Pham*, Hoa Nguyen Xuan*, Nhi Pham, Phuong Dat, Thi Thu Trang Nguyen. Interspeech 2024 (Oral).

TL;DR

The dataset comprises over 174,000 spoofed utterances and 164,000 authentic utterances from 1,382 speakers, generated with 3 techniques: voice conversion, replay, and adversarial attack.

MSV challenge: Language-adversarial training for indic multilingual speaker verification

Vu Hoang*, Nguyen Van Huy*, Ngo Thi Thu Huyen*, Viet Thanh Pham. Journal of Computer Science and Cybernetics.

TL;DR

2023

Vietnam-Celeb: a large-scale dataset for Vietnamese speaker recognition HuggingFace

HuggingFace

Pham Viet Thanh, Nguyen Xuan Thai Hoa, Hoang Long Vu, Nguyen Thi Thu Trang. Interspeech 2023.

TL;DR

This paper presents a large-scale spontaneous dataset gathered under noisy environments, with over 87,000 utterances from 1,000 Vietnamese speakers of many professions, covering 3 main Vietnamese dialects.

📝 Preprints

2025

Qwen vs. Gemma Integration with Whisper: A Comparative Study in Multilingual SpeechLLM Systems

Long-Vu Hoang*, Tuan Nguyen*, Tran Huy Dat. Interspeech 2025 Challenge Submission.

TL;DR

We combine a fine-tuned Whisper-large-v3 encoder with projector architectures different LLMs for decoding. We employ a three-stage training methodology. Our best system outperforms the organiser's baseline by 17.55% and finished at top 15.

🎖 Honors, Awards and Activities

- Mar. 2025 I was given the President Certificate of Merit from HUST for students with excellent results and active contributions.

- Jun. 2024 I was nominated by HUST to join the 2024 National University of Singapore (NUS) Young Fellowship Programme. My team and I won the Best Pitching Award for the Effects of Generative AI on PhD research.

- From 2022 to 2024, I participated and organised various challenges for Speaker Verification task, as a part of Vietnamese Language & Speech Processing (VLSP) annual workshops.

💻 Internships and Research Collaborations

- 01/2025-Now I2R, A*STAR, Singapore.

Reference: Dr. Tran Huy Dat, Senior Principal Scientist, Head of Audio Analytics and Speech Recognition. - 10/2022-Now HuSTeP Lab, Hanoi, Vietnam. This is a speech technology research lab at HUST, founded by Dr. NGUYEN Thi Thu Trang. I was the research assistant and lab manager between 10/2023-01/2025.

Reference: Dr. Nguyen Thi Thu Trang, Senior Lecturer at SoICT, HUST. - 10/2023-01/2025, Vbee JSC., Hanoi, Vietnam.

- 08/2023-10/2023, AIV Group, Hanoi, Vietnam.